Industry

AI Startup / Data Analytics / Business Operations

Client

Logic AI

Designing Human-Centered AI for Operational Scale

Overview

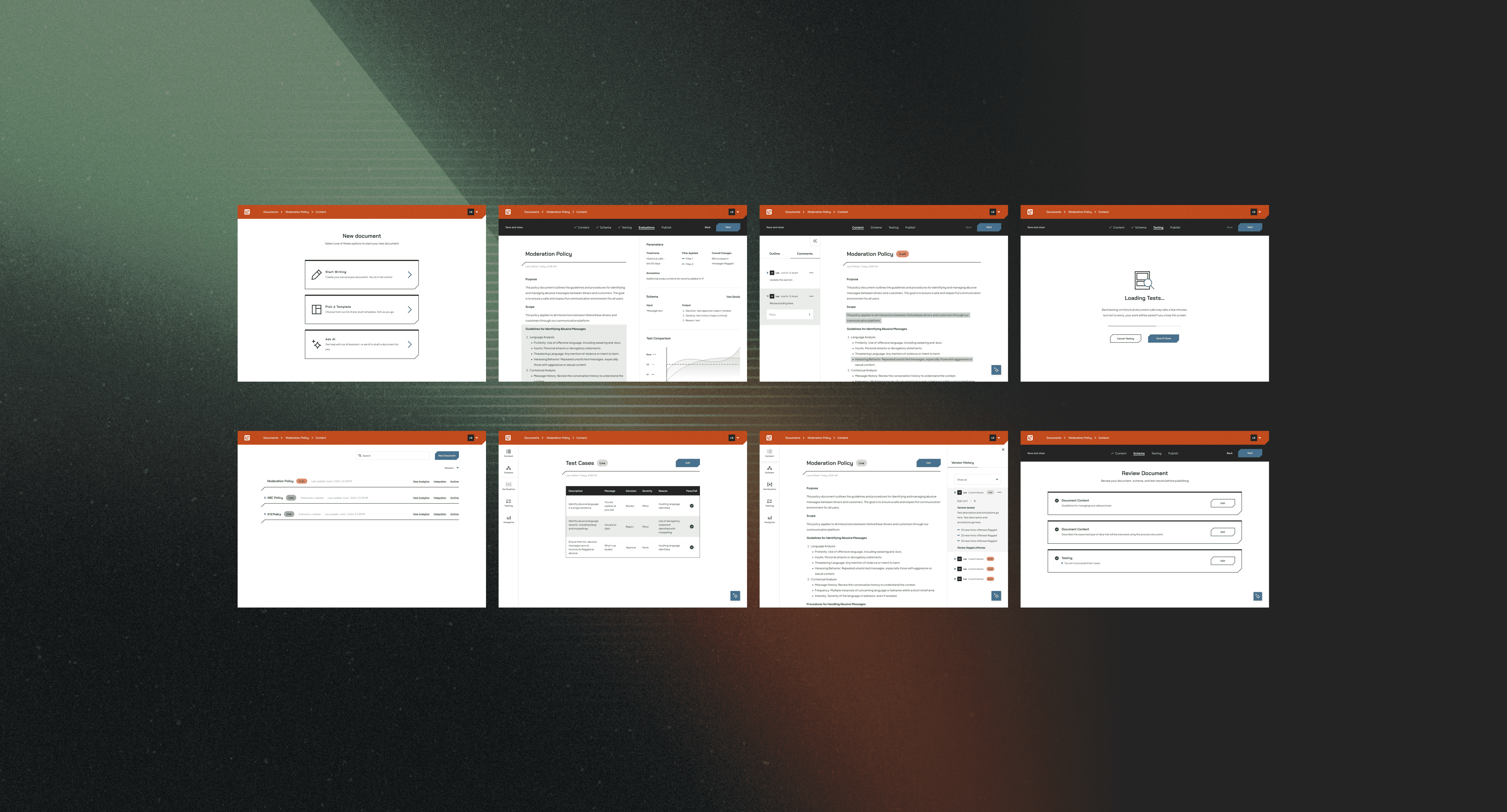

Logic is an AI-powered platform that helps teams turn policies, guidelines, and institutional knowledge into structured workflows that can be applied consistently at scale. I joined at zero, with no code written and nothing designed, and led the UX and experience strategy through to MVP launch. The core challenge wasn't building an AI product. It was designing one that non-technical users could actually trust. These were teams responsible for real decisions, working with a technology that could feel unpredictable and opaque. Making that trustworthy meant doing something harder than enabling outputs. It meant making the space between input, logic, and output legible, so teams could understand what the model was doing, catch where it was wrong, and refine it over time with confidence.

My Role

Led experience design from zero to MVP launch, owning end-to-end strategy and execution.

Facilitated product definition workshops with the full team to establish scope, prioritize features, and align on a shared direction before design began.

Defined the information architecture across process documents and evaluation flows, creating the structural logic the product was built on.

Designed core interaction patterns for the key user behaviors — creating, testing, and refining AI-driven logic in a complex, multi-state environment.

Worked in close collaboration with a visual design lead, maintaining a clear division of ownership: marketing and brand visuals on their side, interaction design and product thinking on mine.

How might we enable teams to turn plain-language intent into production-ready AI agents that can be tested, refined, and trusted in real-world use?

The Problem

Teams responsible for moderation and quality control often rely on a combination of static rules, manual review, and inconsistent judgment calls. As edge cases increase, these systems become difficult to maintain, leading to unclear outcomes and reduced trust in the process. While large language models introduced a new level of flexibility, they also introduced new challenges. Outputs could feel opaque, inconsistent, or difficult to debug. For non-technical users in particular, there was no clear way to understand why a decision was made or how to improve it. The core issue wasn’t just enabling AI to make decisions — it was creating a system where people could understand, guide, and trust those decisions over time.

Key Constraints

Approach

One of the harder design problems wasn't visual. It was conceptual. Early on I had to genuinely wrestle with how schemas, evaluation runs, and historical test sets actually worked before I could design for them. That process turned out to be an asset: the gaps I hit as someone learning the system were often the same gaps non-technical users would hit in production. Designing through that confusion, rather than around it, shaped how much the final experience prioritized explainability over assumed fluency.

I approached the product as a system for shaping and evaluating AI behavior, rather than a traditional interface. The core experience centered around “process documents” that combined structured guidelines with natural language instructions, allowing teams to define how the model should interpret different scenarios.

To make this system usable, I focused on exposing the relationship between input, logic, and output. Users could test real examples against their documents and see how the model responded, creating a tight feedback loop between definition and evaluation. This transformed the experience from writing static rules into actively shaping behavior through iteration.

Because trust was critical, the interface emphasized clarity and traceability. Outputs were presented alongside the relevant portions of the document, helping users understand how decisions were being made without requiring deep technical knowledge. This reduced the “black box” effect and made it easier to identify gaps, edge cases, and opportunities for refinement.

The system was also designed to support continuous improvement. Rather than treating documents as fixed, the experience encouraged ongoing updates based on real-world inputs, allowing teams to evolve their logic as new patterns emerged.
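For readers who think in data structures, the loop described above (define a process document, test a real example against it, and show the output next to the relevant portion of the document) can be sketched roughly as follows. This is an illustration only, not the product's actual implementation: every name here (`Section`, `ProcessDocument`, `Evaluation`, `evaluate`) is hypothetical, and a simple keyword match stands in for the language model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Section:
    """One guideline in a process document (hypothetical structure)."""
    section_id: str
    instruction: str      # plain-language guidance written by the team
    keywords: List[str]   # assumption: stand-in for how a model would interpret the instruction
    decision: str         # outcome to apply when this section is relevant


@dataclass
class ProcessDocument:
    title: str
    sections: List[Section]
    default_decision: str = "approve"


@dataclass
class Evaluation:
    """Result of testing one example, with the sections that drove it."""
    input_text: str
    decision: str
    cited_sections: List[Section] = field(default_factory=list)


def evaluate(doc: ProcessDocument, text: str) -> Evaluation:
    """Test one example against a document and keep the trace.

    The keyword check is a placeholder for a model call; the point is
    that the output carries the sections it relied on, so the interface
    can show the decision alongside the relevant part of the document.
    """
    lowered = text.lower()
    matched = [s for s in doc.sections
               if any(k.lower() in lowered for k in s.keywords)]
    decision = matched[0].decision if matched else doc.default_decision
    return Evaluation(input_text=text, decision=decision, cited_sections=matched)


if __name__ == "__main__":
    doc = ProcessDocument(
        title="Listing review",
        sections=[
            Section(
                section_id="2.1",
                instruction="Reject listings that ask for payment outside the platform.",
                keywords=["wire transfer"],
                decision="reject",
            )
        ],
    )
    result = evaluate(doc, "Please send a wire transfer to reserve.")
    print(result.decision, [s.section_id for s in result.cited_sections])
```

The design choice the sketch tries to capture is that an evaluation never returns a bare decision. It always returns the decision together with the sections it drew on, which is what lets a non-technical reviewer see why an outcome occurred and which instruction to revise.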